New vSAN 6.6 home lab

More than a year ago, I upgraded my home lab to get started with vSAN. Back then I decided to go for a small hardware upgrade and already feared that the new resources would run short again very soon. Of course, that happened shortly afterwards, when I installed the vSAN cluster.

Back then I had already heard about the Xeon D product family, but didn't want to invest in it as prices were really high. Things have changed: prices have dropped, so I purchased two systems to have more resources this time. Compared with my previous setup, each server can address up to 128 GB of memory instead of 32 GB. In total, the 2-node cluster can be expanded to up to 256 GB of memory.

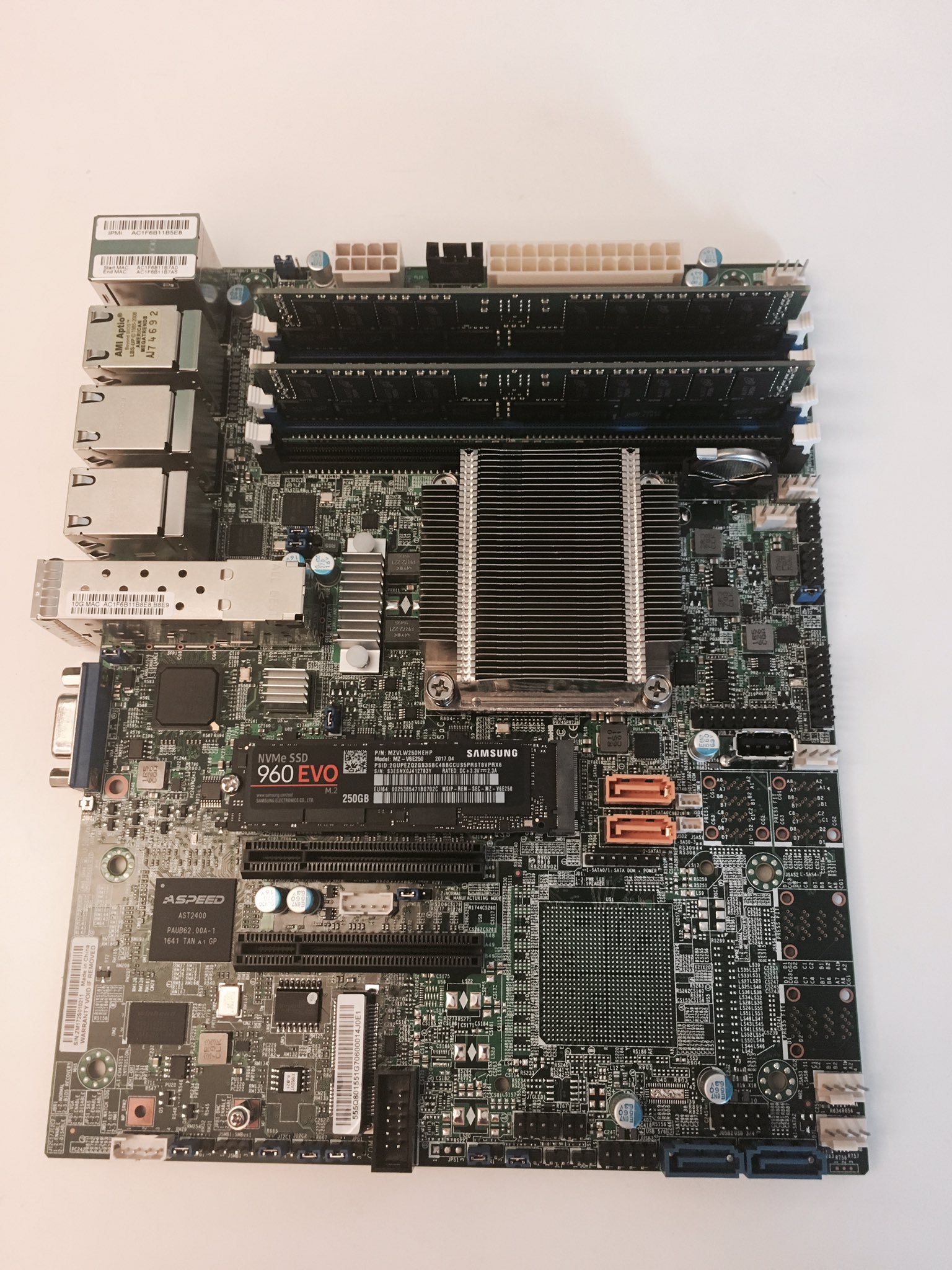

I also focussed on a small SSD upgrade. My previous systems started out as a hybrid setup, but I later switched to two SATA SSDs. My new systems use NVMe (cache layer) and SATA SSDs (capacity layer).

Supermicro offers plenty of Xeon D-based mainboards and also pre-assembled solutions, which makes it really hard to get an overview. Luckily there are some hardware gurus in the community who love to share their knowledge. After exchanging experiences with Mark Brookfield and Erik Bussink, I decided to get the following components:

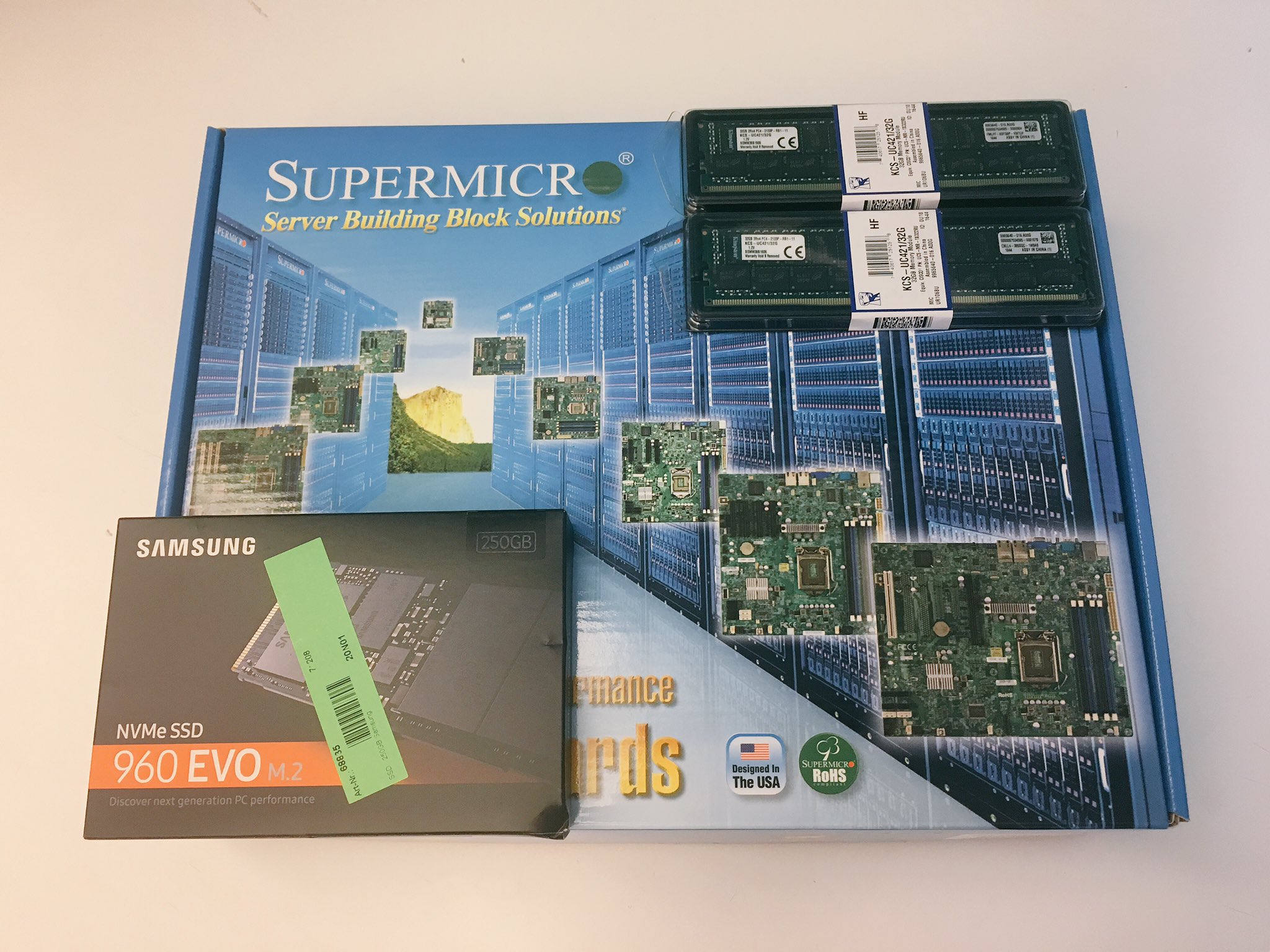

| Part | Product | Link | Price (Germany) |
| --- | --- | --- | --- |
| Mainboard | Supermicro X10SDV-TP8F (MBD-X10SDV-TP8F-O) | [click me!] | 530 € |
| Memory | 2x 32 GB DDR4 RDIMM ECC (manufacturer depending on daily price) | | ~550 € |
| Capacity SSD | OCZ Trion 150 480 GB (pre-existing) | | |
| Cache SSD | Samsung SSD 960 EVO 250GB (MZ-V6E250BW) | [click me!] | 120 € |
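
As a rough back-of-the-envelope check, the new spend per node and the cluster's memory headroom can be tallied in a few lines of Python. This is only a sketch. It assumes the ~550 € memory price covers both 32 GB modules of one node, and it counts the re-used capacity SSD as zero new cost. The names (`PARTS_PER_NODE`, `cluster_summary`) are purely illustrative.

```python
# Prices in EUR, taken from the parts table; all names here are illustrative.
PARTS_PER_NODE = {
    "mainboard": 530,
    "memory_2x32gb": 550,  # approximate; assumed to cover both modules of one node
    "capacity_ssd": 0,     # pre-existing, so no new spend
    "cache_ssd": 120,
}

NODES = 2
INSTALLED_GB_PER_NODE = 2 * 32  # two 32 GB RDIMMs per node
MAX_GB_PER_NODE = 128           # maximum addressable memory per server


def cluster_summary(parts, nodes, installed_gb, max_gb):
    """Sum the per-node part prices and scale cost and memory to the cluster."""
    per_node = sum(parts.values())
    return {
        "cost_per_node_eur": per_node,
        "cost_cluster_eur": per_node * nodes,
        "memory_installed_gb": installed_gb * nodes,
        "memory_max_gb": max_gb * nodes,
    }


summary = cluster_summary(
    PARTS_PER_NODE, NODES, INSTALLED_GB_PER_NODE, MAX_GB_PER_NODE
)
for key, value in summary.items():
    print(f"{key}: {value}")
```

Under these assumptions the new parts come to roughly 1200 € per node, and the cluster starts at half of its maximum memory, which leaves room for the upgrade mentioned above.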

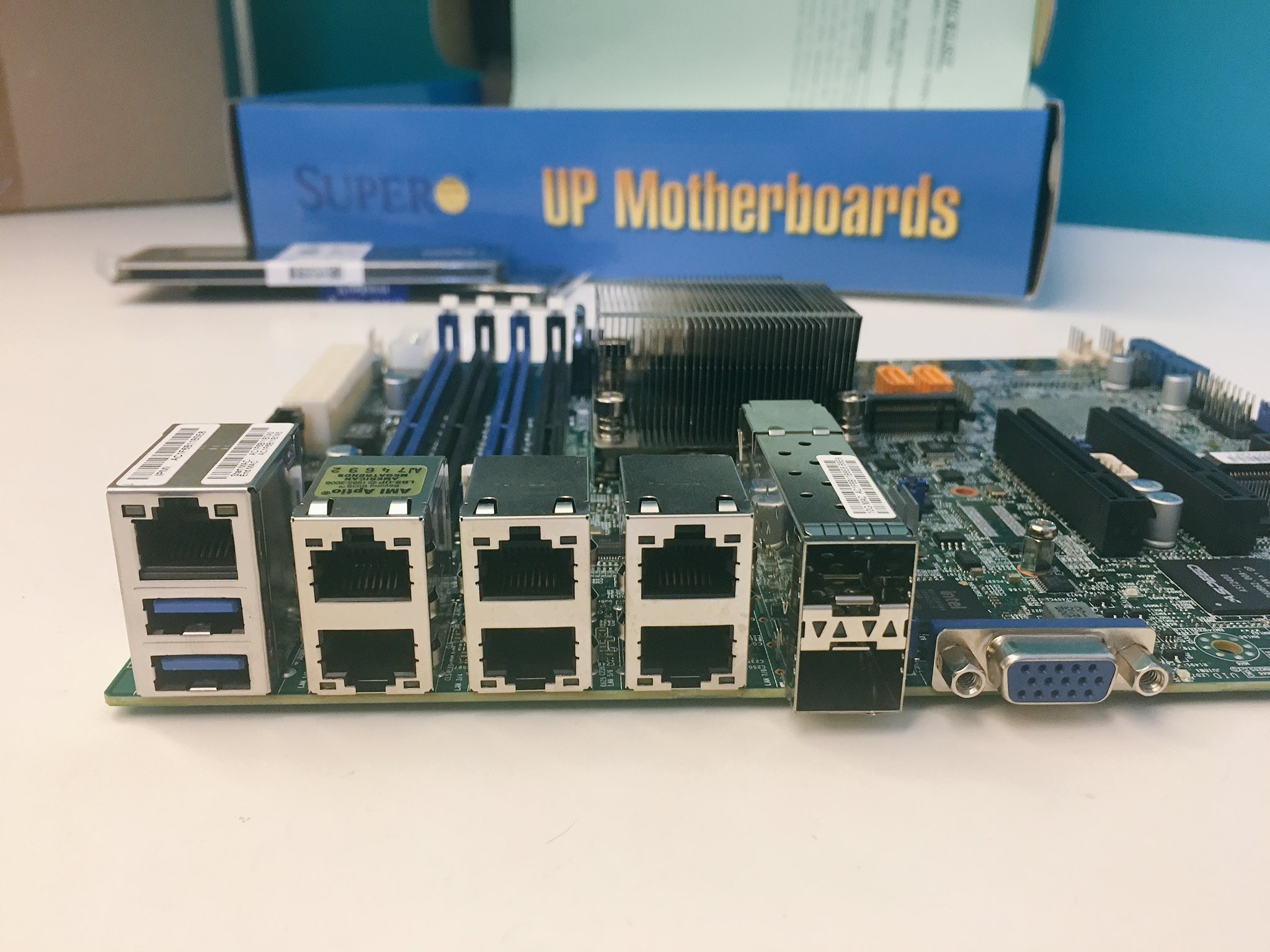

When selecting the mainboard, I focussed on the following:

- Xeon quad core processor

- 10 GbE ports (vSAN/vMotion backend)

- NVMe slots for SSDs

- IPMI / OOBM for remote management

Keeping this in mind, the X10SDV-TP8F was the cheapest mainboard available in Germany during my research. When shopping, make sure you don't accidentally buy the X10SDV-2C-TP8F, which only offers a dual-core Pentium CPU.

Supermicro also offers pre-assembled systems with Xeon D processors. As they could not compete on price with my do-it-yourself setup and I still had some cases and power supplies, I did not consider them further:

| Product | Features | Link | Price (Germany) |
| --- | --- | --- | --- |
| SuperServer E200-8D | 6 core, 2x 1G, 2x 10GBase-T | [click me!] | ~1000 € |
| SuperServer E300-8D | 4 core, 6x 1G, 2x 10G SFP+ | [click me!] | ~800 € |
| SYS-5018D-FN8T | 4 core, 6x 1G, 2x 10G SFP+ | [click me!] | ~1000 € |

The last two products use exactly the same mainboard (X10SDV-TP8F) I selected for my do-it-yourself setup.

Usually I only use Kingston memory for my systems. For the first system, I ordered Kingston modules, but by the time I bought the second node (I bought the systems one month apart), prices had increased sharply, so I decided to order Videoseven modules instead. To be honest, I did not know that Videoseven still existed at this point. The last products I remember were screens and graphics cards back in the 90s. 😄 I don't have any long-term experience yet, but those memory modules work fine for me and the build quality looks very good. I decided to go for RDIMM modules to have the option to upgrade to additional memory later.

For the vSAN cache layer, I decided to order NVMe SSDs, as their prices have dropped considerably in recent months. The capacity layer SSDs I ordered for the previous setup will be re-used.

Erik gave me a hint that it might be a good idea to remove a screw before mounting the mainboard if you want to attach an mSATA SSD later. Again, thanks for sharing!

Some pictures of the parts:

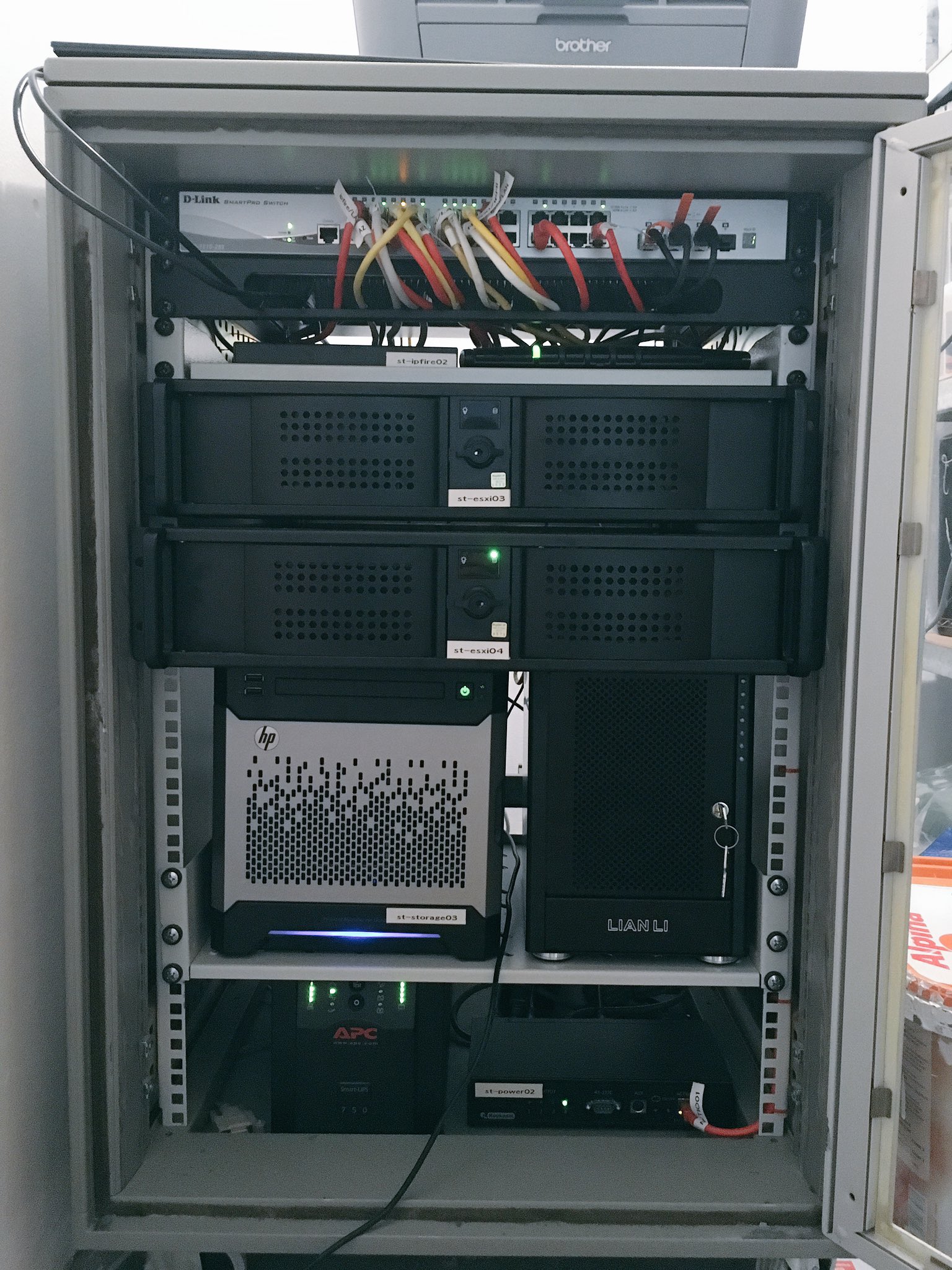

Conclusion

There are plenty of things that could be optimized. For example, my setup is not VMware HCL-compliant, which vCenter Server flags with an alarm.

To fix this, it is necessary to order a certified controller. It looks like the LSI 9207-8i and LSI 9300-8i controllers are pretty decent ones, and the first one seems to be a good budget alternative. Another problem is that my rack space is running low. I'm currently using 2U cases, so one option would be to switch to 1U cases instead. This would allow me to install a third system once memory or vSAN resources run short again. 🙂